GMI Cloud

GMI Cloud is a GPU-focused cloud platform built for AI teams. It provides on-demand access to powerful NVIDIA GPUs for training and running machine learning models. The platform helps developers scale faster and manage heavy AI workloads with less hassle.

Overview

GMI Cloud is a GPU-focused cloud platform built for AI work. It helps developers and companies train, run, and scale machine learning models. The platform provides on-demand access to NVIDIA GPUs and tools made for large models and fast inference.

At its core, it offers three main tools:

- GPU Compute Services. Users get access to NVIDIA H100 and H200 GPUs for training, testing, fine-tuning, and production use.

- Inference Engine. It serves models with low latency and scales resources up or down as needed.

- Cluster Engine. It lets teams manage containerized or bare-metal GPU clusters, with Kubernetes and Slurm support.

The console at console.gmicloud.ai is the web dashboard. It is where users launch GPU instances, manage resources, and run AI jobs, much like other cloud dashboards.

About the company

GMI Cloud is a venture-backed startup. It focuses on connecting high-end GPU hardware with AI builders who need reliable, lower-cost compute. It runs data centers in multiple regions and works closely with NVIDIA. The team has experience in cloud systems, GPU optimization, and enterprise-ready deployments.

Main features

High-performance GPUs. Fast access to H100 and H200 Tensor Core GPUs, with support for newer hardware over time.

Flexible access. Bare-metal or container options with pay-as-you-go pricing and no long-term contracts.

Fast networking. InfiniBand helps distributed training run with low latency and high throughput.

Managed tools. The Inference Engine scales models for production use. The Cluster Engine manages containers, Kubernetes clusters, and bare-metal nodes, with access control and live monitoring.

Security and support. The platform is SOC 2 audited and offers real-time usage tracking and performance data.

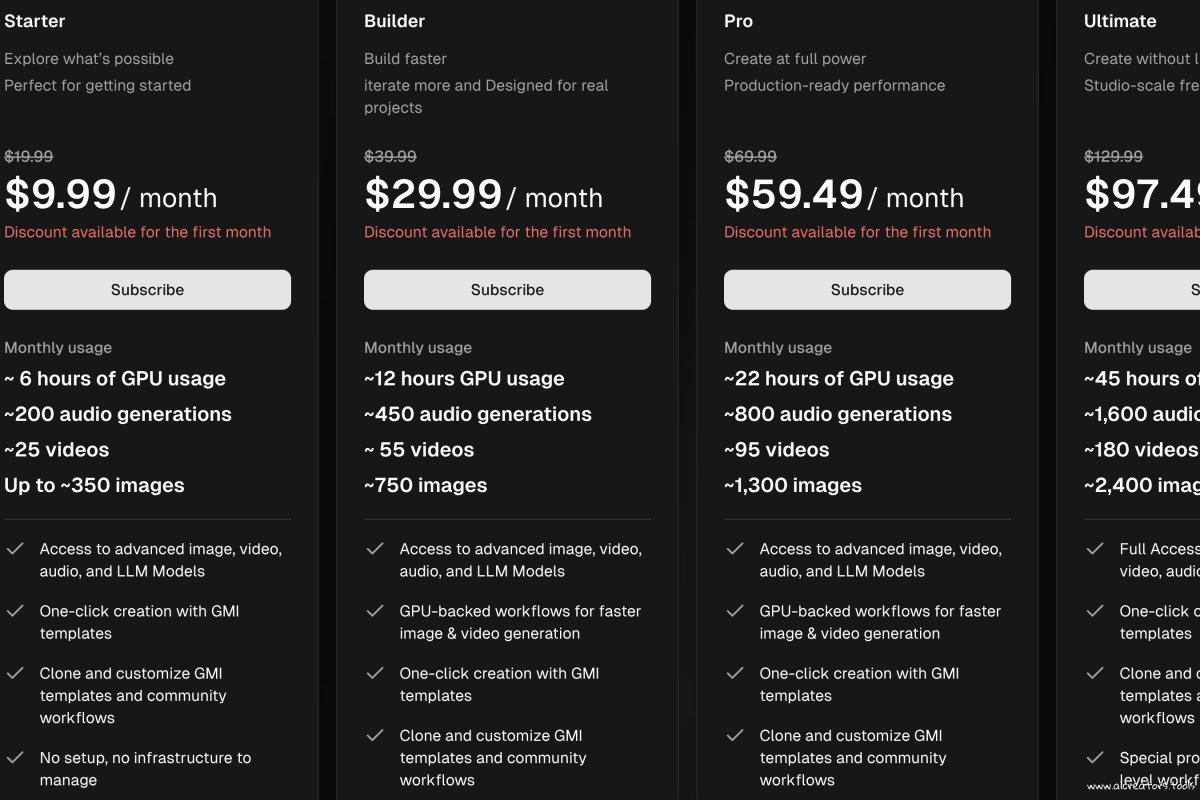

Pricing

It uses pay-as-you-go rates. Rates as of February 2026:

NVIDIA H100. Around 2.10 to 2.98 per hour.

NVIDIA H200. Around 2.50 to 3.98 per hour.

Prices vary by configuration, region, and usage type. Bigger teams can ask for custom pricing.
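As a rough illustration of what these ranges mean for a job budget, the sketch below multiplies the quoted hourly ranges by GPU count and run time. It assumes the listed rates apply per GPU per hour, which the page does not state explicitly, and it ignores region, storage, networking, and any custom pricing. The function name `estimate_cost` and the rate table are hypothetical helpers for this example, not part of any GMI Cloud tool or API.

```python
# Hourly rate ranges quoted on this page (February 2026).
# Assumption: rates are per GPU per hour; the currency is whatever the page lists.
RATES_PER_GPU_HOUR = {
    "H100": (2.10, 2.98),
    "H200": (2.50, 3.98),
}


def estimate_cost(gpu: str, num_gpus: int, hours: float) -> tuple[float, float]:
    """Return a (low, high) pay-as-you-go cost estimate for a job."""
    low_rate, high_rate = RATES_PER_GPU_HOUR[gpu]
    gpu_hours = num_gpus * hours
    # Round to two decimals so the result reads like a price.
    return round(low_rate * gpu_hours, 2), round(high_rate * gpu_hours, 2)


if __name__ == "__main__":
    low, high = estimate_cost("H100", num_gpus=8, hours=24)
    print(f"8x H100 for 24 hours: {low:.2f} to {high:.2f}")
```

Under these assumptions, an 8-GPU H100 job running for 24 hours comes to 192 GPU-hours, or roughly 403.20 to 572.16 at the quoted rates. Actual bills may differ, since prices vary by configuration, region, and usage type.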

Who uses it

AI startups that need strong GPUs without long contracts.

Data science teams training large language models.

Companies running real-time inference systems.

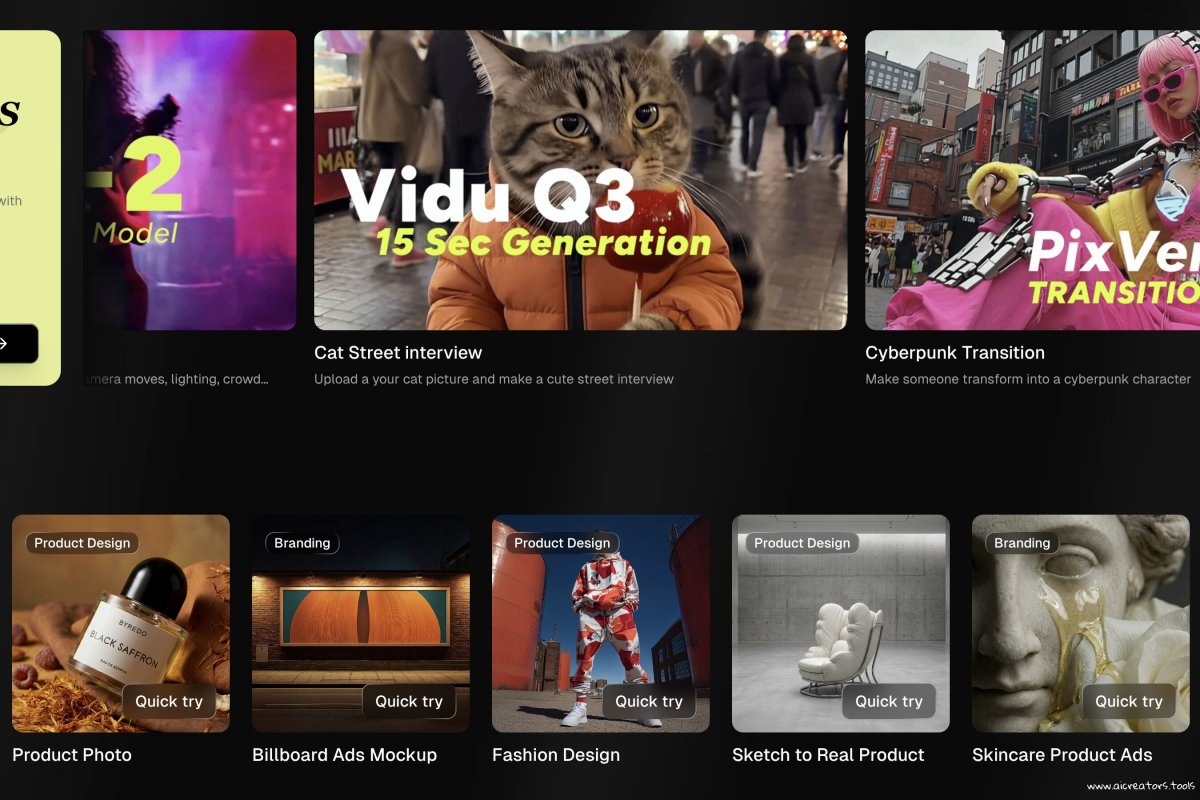

Creative AI teams building video or live apps.

Unlike large cloud providers, GMI Cloud focuses only on GPU-based AI systems. That focus can lower training and serving costs. It can also give faster access to hardware and better performance for heavy AI jobs.

Because it works closely with NVIDIA, the setup is closely aligned with NVIDIA's stack, with tight hardware support and fast networking for large-model training or real-time use.

Tags

Paid, Unknown License, Web-based, #Creative AI Suites

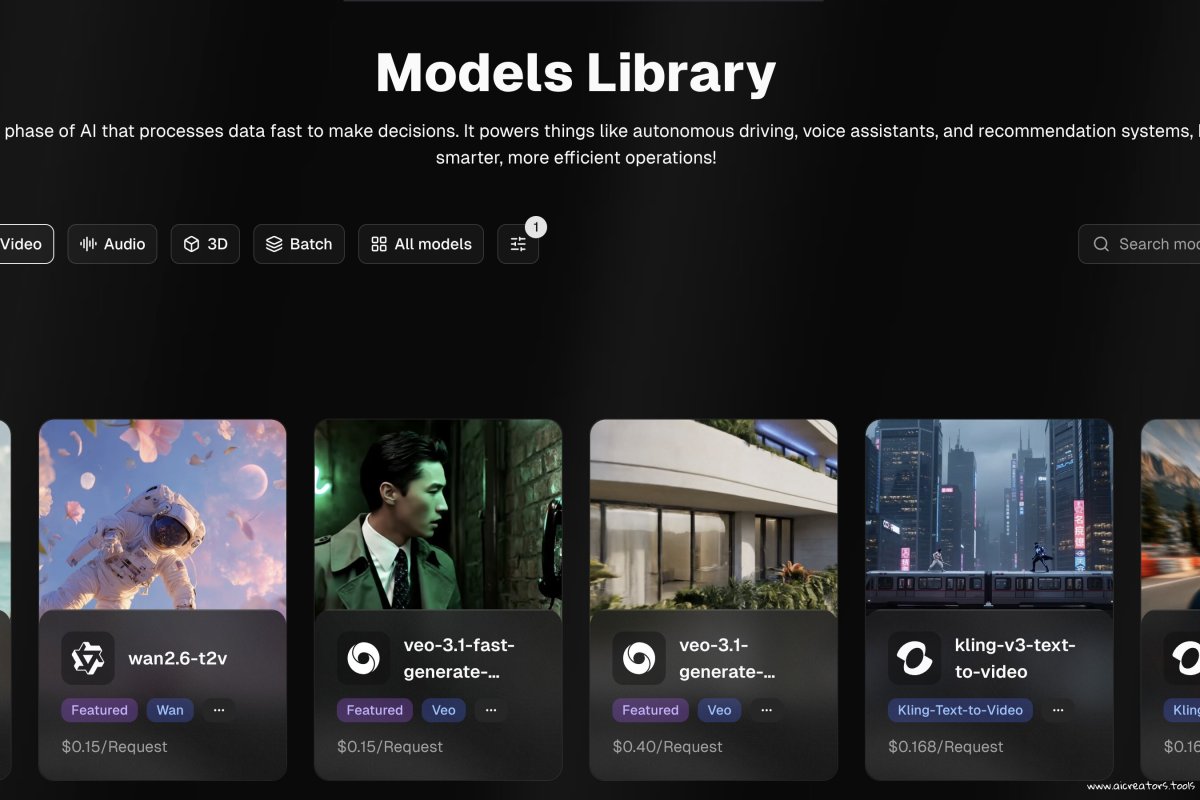



This page was last updated on February 26, 2026 at 3:46 AM